Conquered by Clippy

April 2023

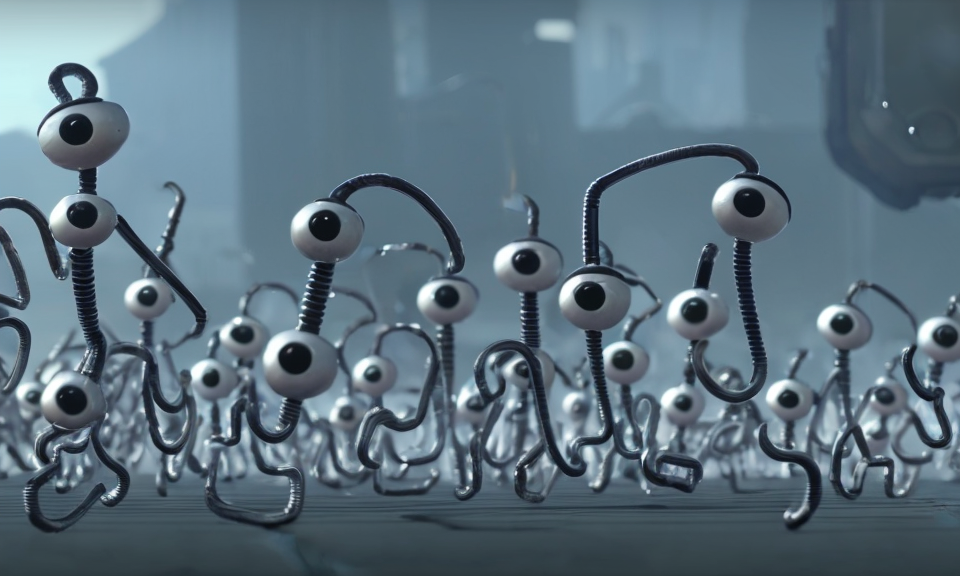

Every semester, the Media Lab at MIT throws a 99Fridays party, a celebration of self-expression and an explosion of creative energy. For our contribution, we used DreamBooth to fine-tune Stable Diffusion on images of Clippy, gathered by grabbing frames from YouTube videos. We began by training on images of Clippy in all his configurations (turning into a bicycle, etc.), but the results were poor and not visually recognizable as Clippy.

For the next attempt, we used only images of Clippy in "paperclip" form and added some higher-resolution web-scraped images to produce less blurry output. I also photoshopped some photos of Clippy onto more diverse backgrounds, to keep the model from overfitting to the few backgrounds in the Clippy images I had found.

This worked much better and produced the video above.

As usual, we generated at lower resolution using our Stable Diffusion pipeline, then upscaled to 4K using Real-ESRGAN, and finally interpolated between consecutive frames using FILM.
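To make the interpolation step concrete, here is a minimal sketch of how inserting midpoints between consecutive frames grows a sequence. It is not our actual pipeline code: FILM is a learned model, and `average_blend` below is only a hypothetical stand-in that averages pixel values. The frame representation (a flat list of numbers) and the number of passes are assumptions chosen for illustration.

```python
from typing import Callable, List, Sequence

# Stand-in for an image: a flat list of pixel values (assumption for illustration).
Frame = List[float]


def average_blend(a: Frame, b: Frame) -> Frame:
    """Hypothetical placeholder for a learned interpolator: per-pixel average."""
    return [(x + y) / 2 for x, y in zip(a, b)]


def interpolate_pass(
    frames: Sequence[Frame],
    midpoint: Callable[[Frame, Frame], Frame],
) -> List[Frame]:
    """Insert one synthesized frame between every pair of consecutive frames."""
    if not frames:
        return []
    out = [frames[0]]
    for prev, nxt in zip(frames, frames[1:]):
        out.append(midpoint(prev, nxt))  # new in-between frame
        out.append(nxt)                  # original frame is kept
    return out


def interpolate(
    frames: Sequence[Frame],
    passes: int,
    midpoint: Callable[[Frame, Frame], Frame] = average_blend,
) -> List[Frame]:
    """Apply the midpoint pass repeatedly; each pass roughly doubles the frame count."""
    result = list(frames)
    for _ in range(passes):
        result = interpolate_pass(result, midpoint)
    return result


def expected_frame_count(n_frames: int, passes: int) -> int:
    """Frames after `passes` midpoint passes over `n_frames` inputs."""
    if n_frames == 0:
        return 0
    return (n_frames - 1) * 2 ** passes + 1


if __name__ == "__main__":
    keyframes = [[0.0], [4.0], [8.0]]
    smooth = interpolate(keyframes, passes=2)
    print(len(smooth), expected_frame_count(len(keyframes), 2))
```

Running the interpolation after upscaling, as in the order described above, means the in-between frames are synthesized from the final-resolution images rather than being upscaled separately.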

Artists

Anna Buchele, Erik Strand, Serge Vasylechko